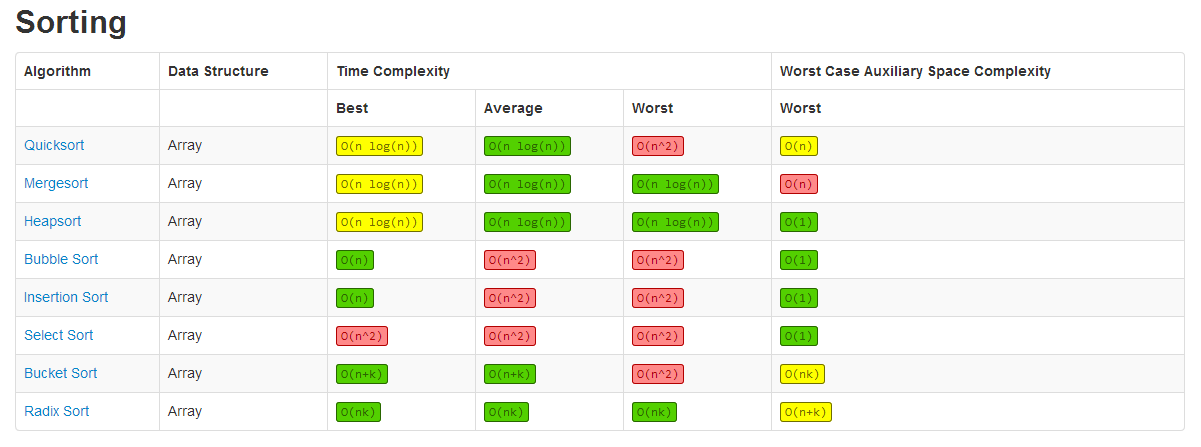

| Sorting algorithm | Space complexity (worst case) | Time complexity (best case) | Time complexity (average case) | Time complexity (worst case) |
|---|---|---|---|---|
| Insertion Sort | O(1) | O(n) | O(n^2) | O(n^2) |
| Selection Sort | O(1) | O(n^2) | O(n^2) | O(n^2) |
| Smooth Sort | O(1) | O(n) | O(n log n) | O(n log n) |
| Bubble Sort | O(1) | O(n) | O(n^2) | O(n^2) |
| Shell Sort | O(1) | O(n) | O(n (log n)^2) | O(n (log n)^2) |
| Mergesort | O(n) | O(n log n) | O(n log n) | O(n log n) |
| Quicksort | O(log n) | O(n log n) | O(n log n) | O(n^2) |
| Heapsort | O(1) | O(n log n) | O(n log n) | O(n log n) |

Big O notation cheat sheet. Time complexity plays a crucial role in competitive programming (CP). Often you can get halfway to a solution just by reading the constraints given in the problem statement, because the complexity of your algorithm decides whether a submission is accepted (AC) or fails with Time Limit Exceeded (TLE).

| Data structure | Search (average) | Insert (average) | Delete (average) | Search (worst) | Insert (worst) | Delete (worst) |
|---|---|---|---|---|---|---|
| Array | O(n) | N/A | N/A | O(n) | N/A | N/A |
| Sorted Array | O(log n) | O(n) | O(n) | O(log n) | O(n) | O(n) |
| Linked List | O(n) | O(1) | O(1) | O(n) | O(1) | O(1) |
| Doubly Linked List | O(n) | O(1) | O(1) | O(n) | O(1) | O(1) |
| Stack | O(n) | O(1) | O(1) | O(n) | O(1) | O(1) |
| Hash table | O(1) | O(1) | O(1) | O(n) | O(n) | O(n) |
| Binary Search Tree | O(log n) | O(log n) | O(log n) | O(n) | O(n) | O(n) |
| B-Tree | O(log n) | O(log n) | O(log n) | O(log n) | O(log n) | O(log n) |
| Red-Black tree | O(log n) | O(log n) | O(log n) | O(log n) | O(log n) | O(log n) |
| AVL Tree | O(log n) | O(log n) | O(log n) | O(log n) | O(log n) | O(log n) |

Running time for each growth function f(n), assuming one operation takes one nanosecond:

| Input size n | log n | n | n log n | n^2 | 2^n | n! |
|---|---|---|---|---|---|---|
| 10 | 0.003 μs | 0.01 μs | 0.033 μs | 0.1 μs | 1 μs | 3.63 ms |
| 20 | 0.004 μs | 0.02 μs | 0.086 μs | 0.4 μs | 1 ms | 77 years |
| 30 | 0.005 μs | 0.03 μs | 0.147 μs | 0.9 μs | 1 sec | 8.4 × 10^15 yrs |
| 40 | 0.005 μs | 0.04 μs | 0.213 μs | 1.6 μs | 18.3 min | -- |
| 50 | 0.006 μs | 0.05 μs | 0.282 μs | 2.5 μs | 13 days | -- |
| 100 | 0.007 μs | 0.1 μs | 0.644 μs | 10 μs | 4 × 10^13 yrs | -- |
| 1,000 | 0.010 μs | 1.00 μs | 9.966 μs | 1 ms | -- | -- |
| 10,000 | 0.013 μs | 10 μs | 130 μs | 100 ms | -- | -- |
| 100,000 | 0.017 μs | 0.10 ms | 1.67 ms | 10 sec | -- | -- |
| 1,000,000 | 0.020 μs | 1 ms | 19.93 ms | 16.7 min | -- | -- |
| 10,000,000 | 0.023 μs | 0.01 sec | 0.23 sec | 1.16 days | -- | -- |
| 100,000,000 | 0.027 μs | 0.10 sec | 2.66 sec | 115.7 days | -- | -- |
| 1,000,000,000 | 0.030 μs | 1 sec | 29.90 sec | 31.7 years | -- | -- |
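If you want to estimate figures like the ones in the table yourself, here is a rough sketch. It assumes one basic operation takes one nanosecond, and `estimated_seconds` and `GROWTH` are made-up names for this example, not part of any library.

```python
import math

NS_PER_OP = 1e-9  # assumption: one basic operation takes one nanosecond

# Growth functions matching the table's columns.
GROWTH = {
    "log n": lambda n: math.log2(n),
    "n": lambda n: n,
    "n log n": lambda n: n * math.log2(n),
    "n^2": lambda n: n ** 2,
    "2^n": lambda n: 2 ** n,
    "n!": lambda n: math.factorial(n),
}


def estimated_seconds(growth: str, n: int) -> float:
    """Return the estimated running time in seconds for input size n."""
    return GROWTH[growth](n) * NS_PER_OP


# Print one row of estimates; change 20 to try other input sizes.
for name in GROWTH:
    print(f"{name:8s} n=20 -> {estimated_seconds(name, 20):.3e} s")
```

Real machines will not match these numbers exactly, but the relative gaps between the columns are the point.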

Here is a cheat sheet you can refer to when working out the runtime of an algorithm.

Notes on Runtimes:

Runtime describes how the amount of work an algorithm does grows with the size of its input.

Constant Time (1) - No matter how many elements we are working with, the algorithm/operation will always take the same amount of time. This is the holy grail of algorithms.

Logarithmic Time (log(n)) - You have this if doubling the number of elements adds only a constant amount of extra work, typically because each step throws away half of the remaining elements. Searching a sorted collection, as binary search does, is usually log(n).
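To see logarithmic growth in action, here is a minimal binary search sketch that also counts its loop iterations. The `steps` counter is only there for illustration, and the input list is assumed to be sorted already.

```python
def binary_search(items, target):
    """Return (index, steps); index is -1 when target is absent.

    Assumes items is sorted in ascending order.
    """
    lo, hi = 0, len(items) - 1
    steps = 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if items[mid] == target:
            return mid, steps
        if items[mid] < target:
            lo = mid + 1   # discard the left half
        else:
            hi = mid - 1   # discard the right half
    return -1, steps


# Doubling the input size adds roughly one extra step.
for size in (1_000, 2_000, 4_000):
    data = list(range(size))
    _, steps = binary_search(data, -1)  # absent target forces a full search
    print(size, steps)
```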

Linear Time (n) - Iterating through all elements in a collection of data. If you see a for loop spanning from ‘0’ to ‘array.length’, you probably have ‘n’ or linear runtime. This is most common in the ‘simpler’ algorithm problems, like reversing a string or counting the number of vowels in a string.
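Both of the linear-time examples mentioned here can be sketched with a single loop each. The function names are just illustrative choices.

```python
VOWELS = set("aeiouAEIOU")


def count_vowels(text: str) -> int:
    """Count vowels with one pass over the string: O(n) in len(text)."""
    count = 0
    for ch in text:        # one iteration per character
        if ch in VOWELS:   # set membership is O(1) on average
            count += 1
    return count


def reverse_string(text: str) -> str:
    """Reverse a string by walking it once from the last index to 0."""
    chars = []
    for i in range(len(text) - 1, -1, -1):
        chars.append(text[i])
    return "".join(chars)


print(count_vowels("Happy coding"))
print(reverse_string("abc"))
```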

Quasilinear Time (n * log(n)) - You have this if doubling the number of elements slightly more than doubles the amount of work. You can often assume that a comparison-based sorting operation is n*log(n).
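Merge sort is a common example of quasilinear time: the list is halved about log(n) times, and each level of halving needs a linear-time merge. This is a plain teaching sketch, not a replacement for Python's built-in `sorted`.

```python
def merge_sort(items):
    """Return a new sorted list; runs in O(n log n) time."""
    if len(items) <= 1:
        return list(items)

    # Split in half and sort each half recursively (about log n levels).
    mid = len(items) // 2
    left = merge_sort(items[:mid])
    right = merge_sort(items[mid:])

    # Merge the two sorted halves in linear time.
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


print(merge_sort([5, 2, 9, 1, 5, 6]))
```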

Quadratic Time (n ^ 2) - Every element in a collection has to be compared to every other element. A trick to help remember this is to think of ‘The handshake problem’. Imagine there is a group of people in a room and a new person walks into it. That person has to be introduced to all the other people in the room and shake each person's hand. Everyone in the room has already shaken every other person's hand. So every element is interacting with all the other elements.
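The handshake problem translates directly into two nested loops. In this sketch, `count_handshakes` is a made-up helper that counts each pair once, which gives n * (n - 1) / 2 handshakes. That still grows like n^2 once constants are dropped.

```python
def count_handshakes(people):
    """Count handshakes when everyone shakes hands with everyone else once."""
    handshakes = 0
    for i in range(len(people)):
        # Pair person i only with the people after them, so no pair repeats.
        for j in range(i + 1, len(people)):
            handshakes += 1
    return handshakes


print(count_handshakes(["Ann", "Ben", "Cat", "Dan"]))
```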

Exponential Time (2 ^ n) - If you add a single element to a collection, the processing power required doubles. Realistically you never want to propose this as a real solution because it is just too awful to compute.
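A quick way to feel exponential growth is to list every subset of a collection: each added element doubles the number of subsets. This is only a sketch, and `all_subsets` is an illustrative name.

```python
def all_subsets(items):
    """Return every subset of items; the result has 2 ** len(items) entries."""
    subsets = [[]]
    for item in items:
        # Each existing subset appears twice: without item and with item.
        subsets += [subset + [item] for subset in subsets]
    return subsets


for n in range(1, 6):
    print(n, len(all_subsets(list(range(n)))))
```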

Big ‘O’ Notation

As software engineers, we can often think of Big 'O' notation as the runtime of the algorithm:

O(n) => Linear

O(1) => Constant

O(n^2) => Quadratic

Identifying Runtime Complexity - Tips and Tricks

Note: These are not rules, they are generalizations. Unfortunately, there is no list to memorize that will tell you, 'these algorithms are always this'. It depends on the individual algorithm. So it really comes down to practicing, writing different algorithms and learning about their corresponding runtimes.

- Iterating with a simple for loop through a single collection, for example reversing a string. => Probably O(n)

- Iterating through half a collection. => Still O(n). Constant factors are dropped from the runtime because they stop mattering as data sets grow very large.

- Iterating through two different collections with separate for loops. For example, you want to reverse two strings both in the same function in two different for loops. You want to consider both n and m because one may be huge and the other much smaller, so a new variable is introduced. => O(n + m)

- Two nested for loops iterating over the same collection. => O(n^2)

- Two nested for loops iterating over different collections => O(n*m) [very similar to n^2]

- Sorting => O(n*log(n))

- Searching a sorted array => O(log(n))
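The difference between separate loops and nested loops over two collections is easy to check by counting iterations. This sketch uses two hypothetical helpers that do nothing but count steps.

```python
def separate_loop_steps(first, second):
    """Two loops one after the other: O(n + m) steps."""
    steps = 0
    for _ in first:
        steps += 1
    for _ in second:
        steps += 1
    return steps


def nested_loop_steps(first, second):
    """One loop inside the other: O(n * m) steps."""
    steps = 0
    for _ in first:
        for _ in second:
            steps += 1
    return steps


a, b = "abc", "de"
print(separate_loop_steps(a, b), nested_loop_steps(a, b))
```

With short inputs the two counts are close, but try a 1,000-character string against a 1,000-character string and the nested version does a thousand times more work per element.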

Interview Cake and LeetCode are great resources for practicing algorithms while learning about their runtimes.

Happy coding!